I recently attended one of the many 'AI Summits' aimed at developers, entrepreneurs, technology founders and investors in the AI space. In discussions about the future of AI, international standards (ISO, IEC, CEN-CENELEC, IEEE, … Continue Reading ››

Launch of the AI Assurance Club web site

Over the years I've been involved in various web site launches. The latest one, for the AI Assurance Club, feels special since I was closely involved with all of the content, and developed the site in Squarespace. The new site brings together all the work of the Club since its inception in … Continue Reading ››

Is this the AI Act’s Y2K Moment?

Back in the late 1990s, a small group of people realised that storing the year in a two-digit format might cause some problems at midnight on 31st December 1999. As the date flipped over from 31-12-99 to the very odd-looking 01-01-00, would critical IT systems fail? After all, the date has never gone 'backwards'. … Continue Reading ››

Digital Trust – Trusting others in the digital realm

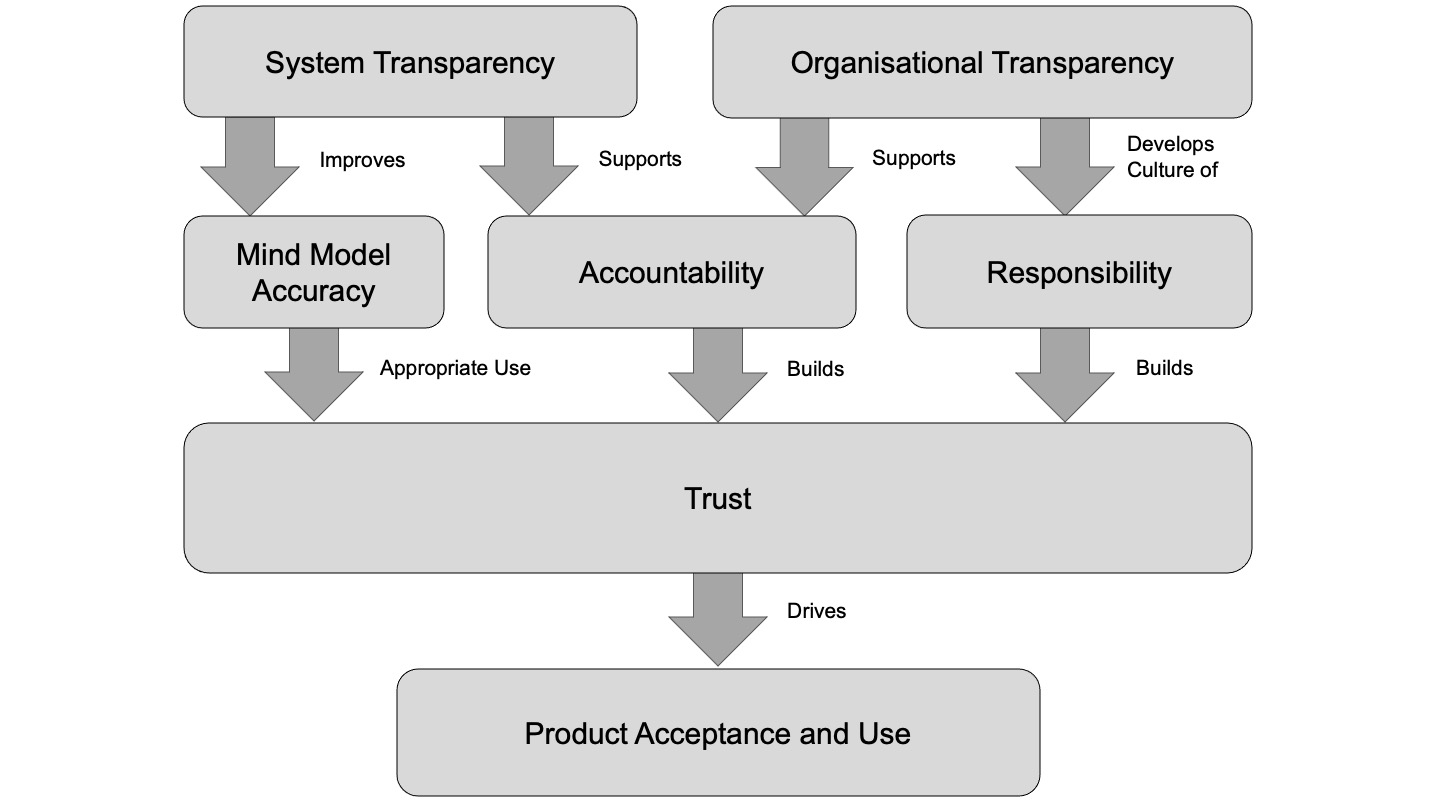

In 2020, I wrote about trust within the context of accountability, responsibility and transparency. You can find that content in my book, but here is a diagram outlining how trust is driven by both system and organisational transparency.

Continue Reading ››

Humans are more than biological creatures

Earlier this month I attended one of the regular BRLSI philosophy talks. Andreas Wasmuht prepared an excellent introduction to the work of Martin Heidegger, specifically his work Being and Time (first published in 1927). This post is not specifically about that work, but it got me thinking again about how much more … Continue Reading ››

Taking AI Deployment Seriously

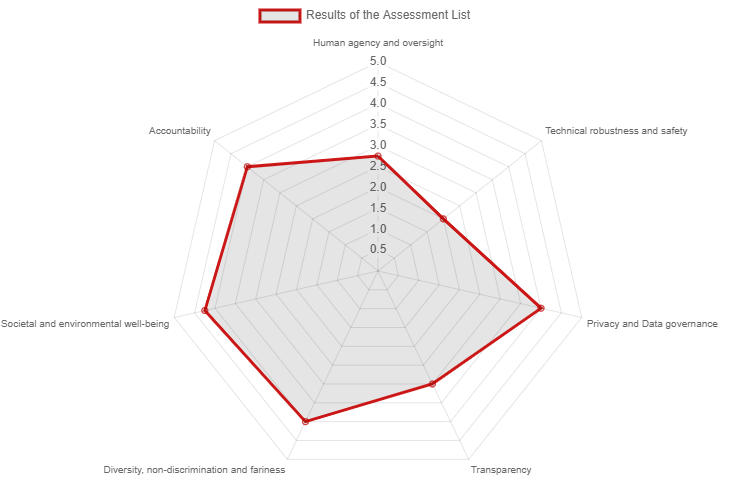

I'm just back from Copenhagen where we ran a roundtable on AI assurance, asking how we can best use tools to reduce risk and address compliance with upcoming regulations, particularly the European AI Act. The speaker details are in the link, and there will be a full video too. We had about … Continue Reading ››

Moving on

Yesterday was my last day of employment at The University of Bath. I have taught Robotics and Autonomous Systems for four years (12 semesters). It's been a great experience, especially the contact with students in lectures, labs and tutorials. But now it is time to move on. The workload is relentless and gruelling. The last … Continue Reading ››

IET Responsible AI Webinar

Delighted to be part of the programme for the @IETevents new webinar series on Responsible AI, which explores cutting-edge technology and future applications over seven sessions in November and December. Booking is open now: http://ow.ly/Kcdg30rWpTu